After becoming the module chair of TM354 Software Engineering, I had a look at two related postgraduate modules, M813 Software Development and M814 Software Engineering.

These two modules sit alongside a number of other modules that make up the MSc in Computing programme. My intention was to see what related topics and subjects are taught, and whether there were any notable differences in how they were taught.

This post aims to highlight some of the key elements of these modules. To prepare it, I looked carefully through the module materials, including the assessment materials, and spoke with each of the module chairs. My aim was to identify themes and topics that might feed into a future replacement for TM354, or into another related module. This summary is by no means comprehensive; the points I pick up on do, of course, reflect my interests.

I hope these notes are useful to anyone who is interested in software engineering, postgraduate computing, or both. Towards the end of the post, I briefly compare and contrast the two modules and share some links to resources for anyone who might be interested.

M813 Software Development

M813 aims “to provide the skills and knowledge necessary to develop software in accordance with current professional practice, approaches and techniques”.

The key module learning aims are to:

- teach you a variety of fundamental techniques for software development across the software lifecycle, and to provide practice in the use of these techniques

- give you enough knowledge to be able to choose between different development techniques appropriate for a software development context

- make you aware of design and technology trade-offs involved in developing enterprise software systems

- enable you to evaluate current software development practices

- give you an understanding of current and emerging issues in software development

- give you the research skills needed to stay at the leading edge of software development.

The module description suggests that students “will have an opportunity to engage with an organisational problem of your choice, working towards a fit-for-purpose software solution” and students “will also have an opportunity to carry out some independent research into issues in software development, including analysing, evaluating and presenting results”.

It makes use of a set text, Head First Design Patterns, accessed through the university library. To help students with the more technical bits, it shares some resources about a graphical tool, Visual Paradigm, which enables students to create diagrams using the Unified Modelling Language (UML).

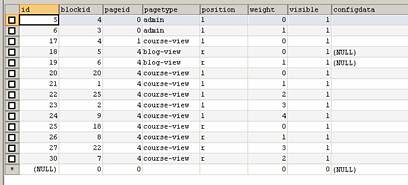

The module has 10 units of study, spread over four blocks. The module’s assessment strategy is summarised below, followed by a summary of each block.

Assessment strategy

Like many other modules, it has two assessment components: tutor-marked assignments (TMAs) and an examinable component, which is an end-of-module assessment (EMA). Interestingly, the TMAs take a more practical, skills-focused perspective on software development, whereas the EMA is more about carrying out research that is applied to a study context. To pass the module, students need to gain an average score of 50% in each of the two components.

TMAs 1 and 3 each account for 30% of the continuously assessed part of the module. Because of its practical focus, TMA 2 accounts for the remaining 40% of the overall TMA score.

Block 1: Software development and early lifecycle

This block is described as helping to “learn the principles and techniques of early software lifecycle, from requirements and domain analysis to software specification. You will engage with a number of practices, including capturing and validating requirements, and UML (Unified Modelling Language) modelling with activity and class diagrams.”

The module opens with a research activity that involves finding and reading academic articles. Three further research activities build on this first searching activity. These activities help students to understand what the academic study of software engineering looks like. Plus, when working as a practising software engineer, it’s important to know how to find and evaluate information about methods, approaches, and frameworks.

This unit begins to introduce students to a tool that they will use throughout the module: Visual Paradigm. As the module progresses, students learn more about different UML diagrams, such as use case diagrams, class diagrams, and activity diagrams.

Unit 1, introducing software development, offers a couple of perspectives, a philosophical one and a historical one (history is always useful), before discussing risk and quality and then starting to look at UML.

Unit 2, requirements and use cases, covers the characteristics of requirements and the forms in which they can be presented. Unit 3, from the context to the system, starts with activity diagrams (which are all about representing a context) and moves on to class diagrams, which are about beginning to realise a software design using abstractions. Finally, unit 4, specifying what the system should do, touches on more formal aspects of software specification.

Block 2: Design and code

This next block explores “principles and techniques of software design, construction, testing and version control”. Other topics include design patterns, UML modelling with state diagrams, and creating software using the Java language. Of all the blocks in the module, this is the one with the most practical focus.

In addition to links to further video tutorials about Visual Paradigm, there’s some guidance about how to get started with Microsoft Visual Studio Code, and some initial development activities.

Unit 5, design, introduces some basic design principles and new forms of diagram: communication diagrams and object diagrams. Unit 6, from design to code, shares more detail about the principles of object-oriented programming and goes on to introduce the topic of configuration management. Unit 7, design patterns, continues the object-oriented programming theme by introducing a set of patterns from the Gang of Four text, complemented by a software development activity.
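To give a flavour of what a Gang of Four pattern looks like in code, here is a minimal sketch of one of them, Strategy. M813 works in Java; I’ve used Python here purely for brevity. The choice of pattern and every class name (`DiscountStrategy`, `NoDiscount`, `PercentageDiscount`, `Checkout`) are my own hypothetical illustration, not taken from the module materials.

```python
from abc import ABC, abstractmethod


class DiscountStrategy(ABC):
    """Common interface for interchangeable pricing algorithms (hypothetical example)."""

    @abstractmethod
    def apply(self, amount: float) -> float:
        """Return the price after this strategy has been applied."""


class NoDiscount(DiscountStrategy):
    def apply(self, amount: float) -> float:
        return amount


class PercentageDiscount(DiscountStrategy):
    def __init__(self, percent: float) -> None:
        self.percent = percent

    def apply(self, amount: float) -> float:
        return amount * (100 - self.percent) / 100


class Checkout:
    """Context class: it delegates pricing to whichever strategy it currently holds."""

    def __init__(self, strategy: DiscountStrategy) -> None:
        self.strategy = strategy

    def total(self, amount: float) -> float:
        return self.strategy.apply(amount)


checkout = Checkout(NoDiscount())
print(checkout.total(80.0))  # 80.0
checkout.strategy = PercentageDiscount(25)  # swap the algorithm at run time
print(checkout.total(80.0))  # 60.0
```

The design choice the pattern captures is that `Checkout` depends only on the `DiscountStrategy` interface, so a new pricing rule can be added as a new class without changing `Checkout` itself.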

Block 3: Software architectures and systems integration

Block 3 goes up a level to explore how to “develop software solutions based on software architectures and frameworks”.

Unit 8, software architectures, introduces the notion of architectural patterns and how to model patterns using UML. Another useful topic introduced is state machines. An important theme is the idea of layers of software which, in turn, is linked to the notion of persistence (that is, how data can be saved). This is complemented by unit 9, component-based architectures, which offers a specific example. The block concludes with unit 10, service-oriented architectures.

Block 4: EMA preparation

This fourth block relates to the module’s end-of-module assessment (EMA), in which students carry out some applied research into a software context with which they are familiar. To help students prepare, there are some useful preparatory resources.

Reflections

I really liked that this module brings in a bit of history, describing the history of object-oriented programming. I also liked that it shared some really useful descriptions about the differences between scholarship and research. There are some common elements between M813 and TM354, such as requirements and the use of UML, but I’ll say more about this in a later section.

M814 Software Engineering

M814 is “about advanced concepts and techniques used throughout the software life cycle” and replaces two earlier 15 point modules: M882 Software Project Management and M883 Requirements Engineering.

The module aims are to:

- develop your ability in the critical evaluation of the theories, practices and systems used in a range of areas of Computing

- provide you with a specialised area of study in order that you can experience and develop the frontiers of practice and research in focused aspects of Computing and its application

- encourage you, through the provision of appropriate educational activities, to develop study and transferable skills applicable to your employment and continuing professional development

- enable you to develop a deeper understanding of a specialist area of Computing and to contribute to future developments in the field.

Although this module is less ‘applied’ than M813, it has some important practical elements. Students make use of Git and GitHub, and use a simulation and modelling tool, InsightMaker.

The module has four study blocks containing 26 study units, a lot more than M813. These are summarised in the following sections. Students are also required to consult a set text, Mastering the Requirements Process by Robertson and Robertson, which is also available through the OU Library.

Assessment strategy

The module has three TMAs and an end of module exam, which is taken remotely (as opposed to an EMA). TMAs 1 and 3 have a weighting of 30% each, with TMA 2 being slightly more substantial, accounting for 40%. Students have to pass both the TMAs and the exam, gaining an average of 50% in each.

The exam covers all module learning outcomes and is split into two sections. For the second section, students need to be familiar with a research article.

Block 1: Software engineering context

The first two units, unit 1, software in the information society, and unit 2, the organisational and business context, introduce software engineering. This is followed by unit 3, organisational context, codes and standards, whose title refers to professional codes and to professional and technical standards. Accompanying topics include software and the law, which covers intellectual property, trademarks, patents, and data protection (GDPR) legislation. The final unit, unit 4, addresses ethics and values in software engineering.

Block 2: Software engineering methods and processes

Block 2 concerns software engineering methods and processes. The first two units highlight the notion of the process model, project management, and quality management, which includes the ISO 9001 standard and Capability Maturity Model Integration (CMMI). These are presented in unit 6, software activities, and unit 7, software engineering processes.

The block then covers unit 8, agile processes, and unit 9, managing resources, which include materials about Scrum, Kanban, and the Scaled Agile Framework (SAFe), a set of workflow patterns for implementing agile practices. There is also a case study that describes how agile is used in practice. I remember seeing some photographs showing how developers share information about project status using whiteboards and other displays. The block concludes with unit 10, managing uncertainty and risk, and unit 11, software quality.

Part of this block makes use of simulation, introducing a ‘simulation modelling tool’ that can be used to experiment with Brooks’ law: the observation that adding people to a late software project makes it later. As an aside, this reminds me of a short article that touched on a similar topic. In the context of M814, I like how simulation has been applied in an interesting and pedagogically helpful way.
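One of the arguments behind Brooks’ law is communication overhead: a team of n people has n(n-1)/2 pairwise communication channels, so coordination costs grow much faster than headcount. The sketch below is a deliberately crude toy model of that one idea, not the model used in M814. The function names and the `overhead_per_channel` parameter (and its default value) are hypothetical assumptions chosen only for illustration, and the model ignores other factors Brooks discusses, such as the time it takes new people to become productive.

```python
def communication_channels(team_size: int) -> int:
    """Number of pairwise communication paths in a team: n(n-1)/2."""
    return team_size * (team_size - 1) // 2


def effective_capacity(team_size: int, overhead_per_channel: float = 0.05) -> float:
    """Toy model (an assumption, not the module's simulation): each person
    contributes 1 unit of work per week, and every communication channel
    costs `overhead_per_channel` units. Capacity cannot fall below zero."""
    overhead = overhead_per_channel * communication_channels(team_size)
    return max(0.0, team_size - overhead)


for n in (2, 5, 10, 20, 30):
    print(f"team={n:2d} channels={communication_channels(n):3d} "
          f"capacity={effective_capacity(n):.2f}")
```

With these made-up numbers, capacity rises with team size at first, peaks at around 20 people, and then falls: beyond that point, each extra person adds more coordination cost than useful work.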

Block 3: Software deployment and evolution

Block 3 concerns software deployment and evolution; in other words, what happens after implementation. It includes some materials about DevOps (the integration of software development with software operations) and about continuous integration and continuous delivery. There are three units: unit 12, software configuration management, which introduces Git and GitHub; unit 13, software deployment; and unit 14, software maintenance and evolution.

This block returns to simulation, specifically exploring Lehman’s second law, which states that the complexity of an evolving software system increases unless work is done to maintain or reduce it. Students are also directed to a textbook, Continuous Delivery: Reliable Software Releases through Build, Test, and Deployment Automation, by Humble and Farley.
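To make the idea behind Lehman’s second law concrete, here is a minimal toy simulation, written under my own assumptions rather than reproducing the M814 simulation. Each release adds complexity in proportion to the current complexity, and a separate ‘refactoring’ effort removes some of it. The function name and the parameters `growth_rate` and `refactoring_rate` are hypothetical, and the numbers are illustrative only.

```python
def simulate_complexity(releases: int, growth_rate: float,
                        refactoring_rate: float, initial: float = 100.0) -> list[float]:
    """Toy model of Lehman's second law (all parameters hypothetical).

    Each release adds `growth_rate * complexity` through new changes and
    removes `refactoring_rate * complexity` through deliberate clean-up work.
    Returns the complexity after each release, starting with `initial`.
    """
    complexity = initial
    history = [complexity]
    for _ in range(releases):
        added = growth_rate * complexity         # changes in this release add complexity
        removed = refactoring_rate * complexity  # work done to reduce complexity
        complexity = max(0.0, complexity + added - removed)
        history.append(complexity)
    return history


unmanaged = simulate_complexity(releases=10, growth_rate=0.05, refactoring_rate=0.0)
managed = simulate_complexity(releases=10, growth_rate=0.05, refactoring_rate=0.05)
print(f"No refactoring:   {unmanaged[-1]:.1f}")
print(f"With refactoring: {managed[-1]:.1f}")
```

Without any refactoring effort, complexity compounds release after release (here, by roughly 63% over ten releases); when the clean-up effort matches the rate at which complexity is added, it stays flat. A real simulation would, of course, also model the cost of that effort.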

Block 4: Back to the beginning

The final block returns to the beginning by looking at requirements engineering, drawing extensively on the set text, Mastering the Requirements Process. It introduces what is meant by requirements engineering, a subtopic within software engineering. Unit titles for this block include scoping the business problem, functional and non-functional requirements, fit criteria and rationale, ensuring quality of requirements, and reusing requirements. The block concludes with a useful section: unit 26, current trends in software engineering.

Reflections

I really liked the introductory sections of this module; they adopt a philosophical tone. I also really like how it uses case studies. There is a lot of material to get through, but all of the topics and units are appropriate and are needed to cover the subject in a good amount of depth.

Similarities and differences

There is understandably some crossover between M813 and M814; they complement each other. M813 is more of an ‘applied’ module than either M814 or TM354, but M814 does contain a few practical elements. Its use of simulations is particularly interesting. Compared with the undergraduate software engineering module, TM354, the two postgraduate modules clearly require the application of higher academic skills, such as understanding what it means to carry out scholarship.

In my opinion, there are more similarities between M813 and TM354 than between TM354 and M814. It is worth noting that TM354 introduces topics that can be found in both postgraduate modules.

TM354 and M813 both emphasise design patterns. An important difference is that in M813, students are required to demonstrate how patterns might be applied, whereas in TM354 students demonstrate their understanding of design patterns that have been chosen by the module team. Both modules also explore software architectures and state machines.

There are also differences between TM354 and M813 in terms of tools. TM354 steers away from diagramming tools, whereas M813 makes extensive use of Visual Paradigm. TM354 uses NetBeans for the design patterns task, whereas M813 introduces students to Visual Studio Code.

M814, by contrast, covers a wider variety of concepts that are important to building ‘software in the large’, such as software maintenance and the characteristics of software quality.

UML features in all three modules. They all refer to software development methods and requirements engineering. Significantly, they all use the Robertson and Robertson text. They differ in the depth to which they explore the topic.

To conclude, software development and software engineering are huge subjects, and the three modules mentioned in this post can only begin to scratch the surface. Every problem has a unique set of requirements, and every problem requires different methods. There are two key elements: people and technology. Software is designed by people and used by people, and where there are people, there is always complexity. Adding technology to the mix adds a further dimension of complexity.

Resources

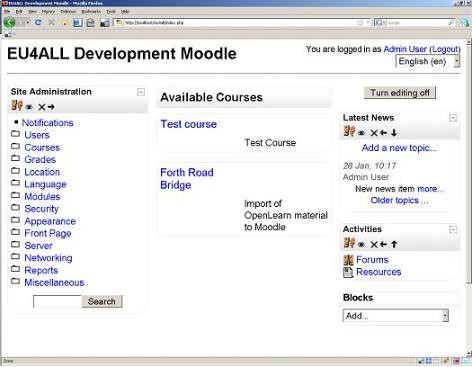

The following links take you to some useful OpenLearn resources:

- Succeeding in postgraduate study

- A sample of TM354 module materials: Approaches to software development

- A sample of M813: An introduction to software development

- A sample of M814: Software and the law

Acknowledgements

Many thanks to Arosha Bandara, who spent some time introducing me to some of the key elements of M814. I also extend thanks to Yujin Yu. Both Arosha and Yujin are professors of software engineering. The current chair of M814 is Professor Andrea Zisman, who is also a professor of software engineering. Thanks are also extended to the TM354 module team: Michael Ulman, Richard Walker, Petra Wolf and Andrea Zisman.